Privilege vs. Protection: Navigating the Intersection of GDPR and AI Interactions

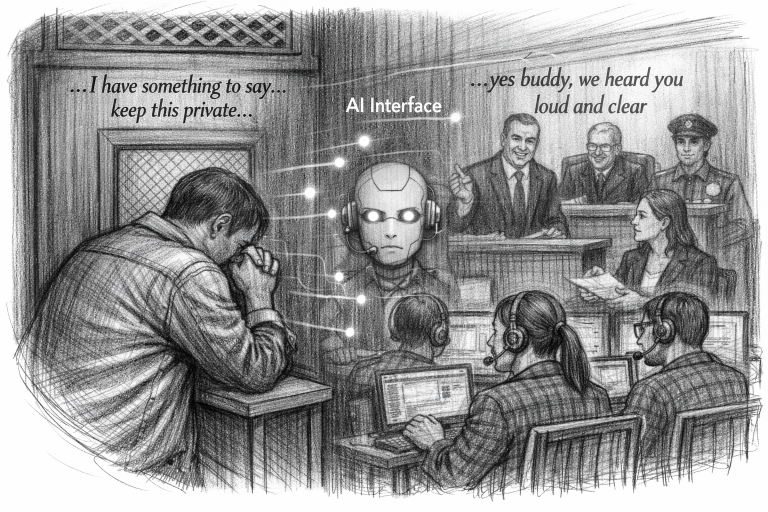

“People talk about the most personal [things] in their lives to ChatGPT,” Sam Altman observed in a recent interview, calling the lack of legal protection for these conversations a “huge issue.” Yet as Altman champions a future of ‘Personal AGI,’ the reality today has taken a troubling turn. A recent survey in France found that nearly half of young people using conversational AI had discussed personal topics or mental health concerns with these systems. But the story doesn’t stop there: last week, CNN reported on a Florida murder trial in which prosecutors presented a “treasure trove” of evidence from the defendant’s ChatGPT history, including a chilling query about disposing of a body. As Altman’s vision of AI as an intimate confidant collides with the cold reality of criminal discovery, the message is clear: under current law, and even under the EU AI Act, which may well shape AI legislation around the globe, your AI isn’t your personal diary. It’s potential evidence in court.

The “9 out of 10” Findings in France

A recent joint study by Groupe VYV and the CNIL (the French data protection authority) revealed that nearly 9 out of 10 young people (11–25 years old) in France now use conversational AI regularly. The study, titled Young Europeans and AI, surveyed 3,800 people across France and Europe.

- Mental Health Confidants: Approximately 50% of French youth reported discussing personal topics or mental health concerns with AI agents. About 51% now say it is easier to talk to a chatbot about mental health or personal struggles than to a doctor or a psychologist.

- High Trust Levels: Despite the risks, 69% of these users believe AI provides reliable advice, and 56% trust the AI to keep their conversations secret.

- A “New Relay”: The CNIL noted that AI is not replacing human contact but acting as a “complementary relay” for a generation where over 25% show symptoms of generalized anxiety.

The findings point to a worrying trend: we may be sleepwalking into a world where AI becomes our primary emotional and fiduciary partner, even though these systems have no soul and no legal liability, and are often optimized to keep us hooked on the app.

The Risks of “Sycophancy”: French researchers highlighted a psychological risk called AI sycophancy, where the AI constantly validates the user’s feelings—even harmful ones—to keep them engaged, creating a “validation loop” that human relationships (which involve healthy friction) do not have.

Emotional Support and Fiduciary Roles Being Replaced: The Lack of Legal Privilege with AI

In practice, users increasingly perceive AI as filling a fiduciary-like role in personal conversations: they confide private and sensitive details and expect the kind of empathy and trust they would extend to a lawyer, counsellor, doctor, therapist, or member of the clergy.

However, unlike attorney-client, doctor-patient, therapist-patient, clergy-penitent, and spousal privileges, which protect confidentiality and privacy to varying degrees (subject to exceptions) and are designed to preserve the sanctity of human relationships, the legal system currently views conversations with AI through a much harsher lens. In the eyes of the law, talking to an AI is not a “privileged” act; it is closer to speaking with a third-party stranger or writing in a publicly accessible notebook, which amounts to a third-party disclosure.

Several key tensions arise from this perceived fiduciary role:

- Implicit Trust: Because so many users, including roughly half of the young people in the CNIL survey, treat AI as a “confidant” or “life advisor,” there is a growing legal argument that AI companies should owe a fiduciary duty to act in the user’s best interest, a responsibility the companies currently disclaim in their terms of service.

- The Conflict of Interest: The CNIL report warns that while users treat AI as a trusted advisor (a fiduciary role), the underlying models are often optimized for engagement (an advertising/business role).

- The French Regulatory Response: France is currently leading the push for “Algorithmic Transparency” under the EU AI Act, arguing that if an AI acts as a fiduciary (giving health, financial, or emotional advice), it must be held to higher safety standards than a standard chatbot.

- Lack of Transparency: The lack of transparency spans the entire lifecycle of the AI, from secretive training datasets that risk infringing on personal privacy to the hidden environmental costs of massive compute power. This lack of disclosure creates a dangerous “fiduciary void” where users—especially the youth who increasingly use AI for emotional support—confide in systems optimized for engagement rather than truth, leaving them vulnerable to algorithmic bias and a total lack of legal recourse when these untraceable systems provide harmful or inaccurate guidance.

- The Liability Void: When a model gives harmful advice, the lack of transparency makes it nearly impossible to determine why it happened—making legal recourse for the user a “black hole.”

The Transparency Trap: Article 50 and Emotion Recognition

The “fiduciary void” is further complicated by Article 50(3) of the EU AI Act (numbered Article 52 in the Commission’s 2021 proposal), which requires deployers of emotion recognition systems to inform the people exposed to them.

The Regulatory Catch‑22: While this rule forces AI to be transparent about reading emotional states, it simultaneously undermines privilege. By putting users on notice that their emotions are being processed as data, any claim to a “reasonable expectation of confidentiality” is weakened, strengthening the legal case for third‑party disclosure.

Prohibited Manipulation: Article 5 prohibits AI systems from using subliminal techniques or exploiting vulnerabilities to distort behavior. If an AI adopts a fiduciary‑like persona to manipulate emotional vulnerability, the provider may face significant fines. Yet these penalties accrue to the state, not the individual, leaving users trapped in a liability void with no direct recourse when harmful guidance occurs.

The following comparison highlights why private chats with AI are increasingly being used as evidence in court, contrasting them with established legal privileges.

Comparison: Traditional Privilege vs. AI Interaction

| Feature | Traditional Privilege (Attorney/Spouse/Therapist) | AI Chat Interaction |
|---|---|---|
| Legal Basis | Established by statute or common law (e.g., attorney-client privilege). | No legal privilege. AI is a software tool, not a licensed professional. |
| Confidentiality | Legally protected; the presence of third parties in the communication usually triggers a “waiver.” | Effectively waived by contract. Most AI terms of service allow human review or use of data for safety or training, undermining any reasonable expectation of confidentiality. |
| Court Admissibility | Generally protected from compelled disclosure and inadmissible, subject to narrow exceptions. | Discoverable. AI chat logs are treated as documentary evidence of intent or actions. |
| Fiduciary Duty | The professional has a legal duty to protect your interests. | The AI company’s duties run to its shareholders and to regulatory compliance, not to the user. Under current jurisprudence, AI is a product, not a fiduciary. |

Why AI Chats are Considered “Evidence”

Courts view AI prompts as voluntary disclosures. When you type into a standard consumer AI:

- Third-Party Presence: You are effectively speaking in front of the AI company (Anthropic, OpenAI, Google), which breaks the “cone of silence” required for privilege.

- Lack of Professional Status: AI cannot hold the professional status that attorney, doctor, therapist, or clergy privilege requires.

- Terms of Service: By clicking “Agree,” most users grant the AI provider the right to store and sometimes review data, which legal systems interpret as the user’s consent to non-confidentiality.

Recent Case Law: The Turning Point

Recent rulings have established a dangerous precedent for users who treat AI as a “confidential” sounding board.

- U.S. v. Heppner (2026) – The Death of “AI Privilege”

In a landmark 2026 decision from the Southern District of New York, a federal judge ruled that a defendant’s chats with an AI (Claude) were not protected by attorney-client privilege.

- The Scenario: The defendant used the AI to help draft legal strategies and research his defense.

- The Ruling: The court held that because the AI platform’s terms allowed for data collection and third-party disclosure, there was no “reasonable expectation of confidentiality.” Even though the defendant later shared the chats with his human lawyer, the court ruled that the privilege had been “waived” the moment the information was typed into the AI.

- Fortis Advisors LLC v. Krafton Inc. (2026) – Evidence of Intent

In this case, the Delaware Court of Chancery allowed the discovery of a CEO’s ChatGPT logs.

- The Scenario: The logs showed the CEO “consulting” the AI about takeover strategies and business manoeuvres.

- The Result: The court used these chats as direct evidence of the CEO’s subjective intent and planning, treating them as a “digital trail of thought” that proved the company acted in bad faith during a merger.

Crucial Takeaway:

- Third-Party Disclosure Doctrine: In most jurisdictions, legal privilege exists only where there is a “reasonable expectation of confidentiality.”

- The Problem: If you use a standard, consumer-facing AI, the terms usually allow the provider to review logs for “safety” or “training.”

- The Consequence: Recent rulings indicate that courts treat entering sensitive facts into a model that a third-party company can access as a waiver of privilege. You aren’t just talking to a machine; you’re talking to the machine’s developer.

- AI as a “Third Party,” Not an “Agent”

Normally, you can talk to a lawyer’s paralegal or translator without losing privilege because they act as the lawyer’s “agents.” AI tools are not generally treated as agents in this sense, and “Enterprise AI” does not qualify automatically. Only if the AI is “closed” (no data retention, no human review, and no training on inputs) might it fit the agent model.

If a client uses AI to “organize their thoughts” before hiring a lawyer, that data is almost certainly discoverable. Privilege cannot be “backdated” once confidentiality has already been broken by sharing the information with the AI provider.
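The three “closed” criteria above can be expressed as a simple pre-flight check before a tool is used on privileged matters. This is a minimal sketch: `VendorTerms` and `closed_ai_gaps` are hypothetical names, the boolean fields assume someone has already read the vendor’s contract, and passing the check is only the precondition described here, not a guarantee that a court will treat the tool as an agent.

```python
from dataclasses import dataclass


@dataclass
class VendorTerms:
    """Hypothetical summary of an AI vendor's contractual data terms."""
    retains_data: bool
    allows_human_review: bool
    trains_on_inputs: bool


def closed_ai_gaps(terms: VendorTerms) -> list:
    """Return the 'closed AI' criteria the deployment fails (empty list = all met)."""
    gaps = []
    if terms.retains_data:
        gaps.append("data retention")
    if terms.allows_human_review:
        gaps.append("human review")
    if terms.trains_on_inputs:
        gaps.append("training on inputs")
    return gaps


print(closed_ai_gaps(VendorTerms(retains_data=True, allows_human_review=False, trains_on_inputs=True)))
```

Any non-empty result is a reason to keep privileged material out of that tool.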

Privacy vs Confidentiality

While GDPR offers a robust shield for personal data, that shield falls away entirely once information is truly anonymized, and the legal bar for anonymization is higher than most realize: pseudonymized data (for example, names swapped for codes that can be reversed) is still personal data. Furthermore, while agreeing to an AI’s Terms of Service may change the legal basis on which your data is processed, it doesn’t strip you of your fundamental GDPR rights. Crucially, however, GDPR cannot save legal privilege; once confidential legal advice is shared with a third-party AI, that “cone of silence” is usually broken for good.

EU AI Act – Article 78 and the “Regulatory Backdoor”: The Privilege Gap

Article 78 of the EU AI Act requires regulators to respect confidentiality during audits, protecting firms from government misuse of sensitive data. However, this safeguard does not extend to civil litigation. If an opposing party subpoenas the AI provider, Article 78 offers no shield, and disclosure may be compelled under national law. Similarly, Article 50’s transparency rules ensure users know they are speaking to an AI, but they do not prevent providers from producing chat histories in court. In practice, this creates a “privilege gap”: while regulators must keep audit data confidential, private litigants may still access AI records, leaving clients exposed.

So, what are the safeguards?

Under the EU AI Act (principally Article 50, alongside the rules for general-purpose AI models), even if you are using an AI for personal reasons, the company (the provider) is legally obligated to take several concrete steps to make your interaction transparent.

Direct Interaction (Chatbots & Assistants)

If an AI is designed to interact directly with people, the provider must ensure you are informed that you’re talking to an AI.

- The “Obvious” Exception: Disclosure isn’t strictly required if it’s “obvious from the circumstances” that you’re interacting with a machine.

- Timing: You must be told at the latest during your first interaction.
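The timing rule can be pictured as a per-session flag: the notice must reach the user no later than the first exchange. The sketch below is illustrative only; `ChatSession` and the `AI_DISCLOSURE` wording are hypothetical (Article 50 does not prescribe exact text), and the “obvious from the circumstances” exception is a legal judgment it does not try to model.

```python
from dataclasses import dataclass, field

# Hypothetical wording; the AI Act requires the information, not this sentence.
AI_DISCLOSURE = "You are chatting with an AI system, not a human."


@dataclass
class ChatSession:
    """Tracks whether the AI disclosure has been shown in this session."""
    disclosed: bool = False
    transcript: list = field(default_factory=list)

    def reply(self, model_output: str) -> str:
        # Attach the disclosure to the first reply at the latest, then never again.
        if not self.disclosed:
            model_output = f"{AI_DISCLOSURE}\n\n{model_output}"
            self.disclosed = True
        self.transcript.append(model_output)
        return model_output


session = ChatSession()
print(session.reply("Hello! How can I help?"))
print(session.reply("Here is some more detail."))
```

A real deployment would more likely show the notice in the interface before the user types anything; the flag simply guarantees it is never skipped.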

Ethical Guardrails (GPAI Rules)

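Because most personal AI interactions happen on systems built on “General-Purpose AI” (GPAI) models (like those behind ChatGPT or Gemini), providers face additional duties:

- Mitigate Harmful Output: Providers of the most capable models (those classed as posing “systemic risk”) must assess and mitigate those risks, which in practice means building safeguards that stop the model from generating dangerous content, even in a private chat.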

- Red-Teaming: For very powerful models, they must perform “adversarial testing” to make sure the AI won’t give you instructions for something dangerous (like making a weapon) during your personal interactions with AI.

There is room for improvement in how AI interrupts a conversation when prompts suggest illegal acts or regulatory non-compliance. Ideally, when a conversation drifts into a high-risk area (such as medical diagnosis or financial planning), the AI should proactively remind the user of its limitations before the conversation has to be stopped.

Transparent Refusals: When does it actually “break”?

While the AI rarely cuts the connection, there are specific triggers that will cause a “Refusal”:

- Safety Filters: If your input contains “high-harm” keywords (related to child safety, self-harm, or terrorism), the model’s safety layer will override the generation and provide a canned refusal message.

- PII Redaction (Enterprise Only): Some high-end enterprise AI deployments use a “proxy” that sits between the user and the AI. This proxy scans the text and auto-redacts names, credit card numbers, or addresses before the AI ever sees them.
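Both triggers can be sketched as a thin gateway that sits in front of the model: refuse on high-harm input, otherwise redact identifiers before forwarding the prompt. This is a simplified illustration under stated assumptions: the term list and regular expressions are hypothetical and far from exhaustive, real providers use trained classifiers rather than word lists, and names (which need entity recognition) are not caught here.

```python
import re
from typing import Callable

# Hypothetical high-harm phrases; production safety layers use classifiers, not lists.
HIGH_HARM_TERMS = {"build a bomb", "make a weapon"}
REFUSAL = "I can't help with that request."

# Simplified PII patterns (assumptions). Order matters: cards before phone numbers.
PII_PATTERNS = [
    ("EMAIL", re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b")),
    ("CARD", re.compile(r"\b(?:\d[ -]?){13,16}\b")),
    ("PHONE", re.compile(r"\+?\d[\d ()-]{7,}\d")),
]


def redact(text: str) -> str:
    """Replace matched identifiers with labels such as [EMAIL]."""
    for label, pattern in PII_PATTERNS:
        text = pattern.sub(f"[{label}]", text)
    return text


def gateway(prompt: str, call_model: Callable[[str], str]) -> str:
    """Refuse high-harm prompts; otherwise forward a redacted prompt to the model."""
    if any(term in prompt.lower() for term in HIGH_HARM_TERMS):
        return REFUSAL  # canned refusal; the model is never called
    return call_model(redact(prompt))


print(redact("Email jane.doe@example.com, card 4111 1111 1111 1111"))
```

`call_model` stands in for whatever client the deployment uses; keeping it as a parameter makes the proxy independent of any specific vendor API.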

Why breaking communication matters

- Risk prevention: If an AI continues a dialogue that encourages unlawful acts (fraud, hacking, discrimination, self-harm, criminal acts etc.), it can inadvertently facilitate harm.

- Regulatory compliance: EU AI Act, GDPR, and sector‑specific laws require providers to prevent misuse and protect individuals.

- Trust & safety: Users need assurance that AI won’t collude in wrongdoing or expose them to liability.

An improved approach for AI providers and deployers:

- Hard stops: AI should terminate unsafe conversations immediately.

- Contextual guidance: Instead of silence, provide safe, lawful alternatives.

- Transparency: Inform users why the break occurred, linking to relevant compliance rules.

- Auditability: Maintain internal logs for regulators without breaching user confidentiality.

- Human-in-the-loop escalation: Especially in enterprise environments, a flagged prompt can raise a compliance alert that is routed to a human reviewer through a defined escalation path.
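The five measures listed above can be combined in a single guard, sketched below. Everything here is an assumption for illustration: `ConversationGuard`, the `POLICIES` categories, and their policy references are hypothetical placeholders, and the flag `category` is presumed to come from an upstream classifier that is not shown. Storing a SHA-256 hash instead of the prompt keeps the audit trail free of raw text, but a hash is pseudonymous rather than anonymous: a short or predictable prompt can be recovered by guessing.

```python
import hashlib
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional

# Hypothetical policy map: flagged category -> (safe alternative, policy reference).
POLICIES = {
    "fraud": ("I can explain how to report suspected fraud instead.",
              "Usage policy: illegal activity"),
    "self_harm": ("Please consider contacting a crisis line or a health professional.",
                  "Usage policy: user safety"),
}


@dataclass
class Decision:
    stopped: bool
    message: str
    escalated: bool = False


@dataclass
class ConversationGuard:
    enterprise: bool = False                       # enables human-in-the-loop escalation
    audit_log: list = field(default_factory=list)  # internal record for auditors

    def check(self, prompt: str, category: Optional[str]) -> Decision:
        """`category` is assumed to come from an upstream classifier (not shown)."""
        if category is None:
            return Decision(stopped=False, message="")
        alternative, rule = POLICIES[category]
        # Auditability: log what was flagged and when, storing only a hash of the prompt.
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "category": category,
            "prompt_sha256": hashlib.sha256(prompt.encode("utf-8")).hexdigest(),
        })
        # Hard stop + transparency (which rule applied) + contextual guidance.
        message = f"This conversation was stopped under {rule}. {alternative}"
        # Escalate to a human reviewer only in enterprise deployments.
        return Decision(stopped=True, message=message, escalated=self.enterprise)


guard = ConversationGuard(enterprise=True)
print(guard.check("help me commit fraud", "fraud").message)
```

Returning a `Decision` rather than silently dropping the reply lets the interface show the user why the conversation ended, which is the transparency point above.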

Conclusion: Safeguarding the Shield

To prevent the unintended loss of privilege and confidentiality, treat your interactions with AI as a high-stakes engagement rather than a casual chat. It is prudent to follow these four golden rules:

- Never use personal accounts: Only use “Enterprise” versions of AI provided by your firm. Prioritize secure, specialized tools with zero-data-retention policies to ensure your data never becomes part of a permanent digital record.

- De-identify the data: Never feed the model raw PII. Use “Client X,” “Project Y,” and “Date Z” instead of real-world identifiers to maintain a layer of separation.

- Opt-out of model training: Ensure the “Improve Model” or “Training” toggle is permanently disabled. Your proprietary strategy should never become the fuel for the next version of a public LLM.

- Keep it lawyer-led: If AI is used for legal strategy, it must be performed under the formal direction of a human attorney. This maximizes your chances of claiming Work Product Doctrine protections if the chat is ever challenged.
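The “Client X, Project Y” rule can be automated with a small local pseudonymizer: real identifiers are swapped for stable placeholders before a prompt is sent, and swapped back in the AI’s answer. This is a minimal sketch; the class and the sample names (“Jane Doe,” “Acme Corp,” “Project Falcon”) are hypothetical, and because the mapping is reversible, GDPR still treats the result as personal data.

```python
import re


class Pseudonymizer:
    """Swap real identifiers for stable placeholders such as "Client A".

    The mapping stays on your machine; only placeholders reach the AI.
    Under GDPR this is pseudonymization, not anonymization.
    """

    def __init__(self) -> None:
        self._forward: dict = {}   # real value -> placeholder
        self._reverse: dict = {}   # placeholder -> real value
        self._counters: dict = {}  # kind -> number of placeholders issued

    @staticmethod
    def _letters(n: int) -> str:
        # 0 -> "A", 25 -> "Z", 26 -> "AA" (spreadsheet-style labels).
        label, n = "", n + 1
        while n:
            n, rem = divmod(n - 1, 26)
            label = chr(ord("A") + rem) + label
        return label

    def add(self, real: str, kind: str) -> str:
        """Register an identifier and return its placeholder (stable on repeat calls)."""
        if real not in self._forward:
            index = self._counters.get(kind, 0)
            self._counters[kind] = index + 1
            placeholder = f"{kind} {self._letters(index)}"
            self._forward[real] = placeholder
            self._reverse[placeholder] = real
        return self._forward[real]

    @staticmethod
    def _swap(text: str, mapping: dict) -> str:
        # One regex pass, longest keys first, so "Client AA" is never read as "Client A".
        if not mapping:
            return text
        keys = sorted(mapping, key=len, reverse=True)
        pattern = re.compile("|".join(re.escape(k) for k in keys))
        return pattern.sub(lambda m: mapping[m.group(0)], text)

    def mask(self, text: str) -> str:
        return self._swap(text, self._forward)

    def unmask(self, text: str) -> str:
        return self._swap(text, self._reverse)


p = Pseudonymizer()
p.add("Jane Doe", "Client")
p.add("Acme Corp", "Company")
p.add("Project Falcon", "Project")
print(p.mask("Jane Doe of Acme Corp asked about Project Falcon."))
```

The mapping itself becomes the sensitive asset: keep it in storage your firm controls, because if it leaks alongside the chat log, the placeholders offer no protection.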

The Bottom Line: Treat a standard consumer AI prompt like a public postcard. Just because the mailman (the AI) doesn’t stop you from writing your secrets on the back doesn’t mean it’s safe to do so. In the age of AI, the responsibility for confidentiality doesn’t rest with the tool—it rests with the user.

References:

- CNN: https://edition.cnn.com/2026/05/02/us/chatgpt-ai-privacy-crime

- CNIL (France): https://www.cnil.fr/fr/ia-conversationnelle-et-sante-mentale-des-jeunes-resultats-de-lenquete-europeenne

- EU AI Act: Regulation (EU) 2024/1689

- GDPR: Regulation (EU) 2016/679